Abstract

We propose a method to control material attributes of objects, such as roughness, metallic, albedo, and transparency, in real images. Our method capitalizes on the generative prior of text-to-image models known for their photorealism, using a scalar value and instructions to alter low-level material properties. To address the lack of datasets with controlled material attributes, we generated an object-centric synthetic dataset with physically based materials. Fine-tuning a modified pre-trained text-to-image model on this synthetic dataset enables us to edit material properties in real-world images while preserving all other attributes. We also show the potential application of our model to material-edited NeRFs.

Parametric Control of Material Attributes

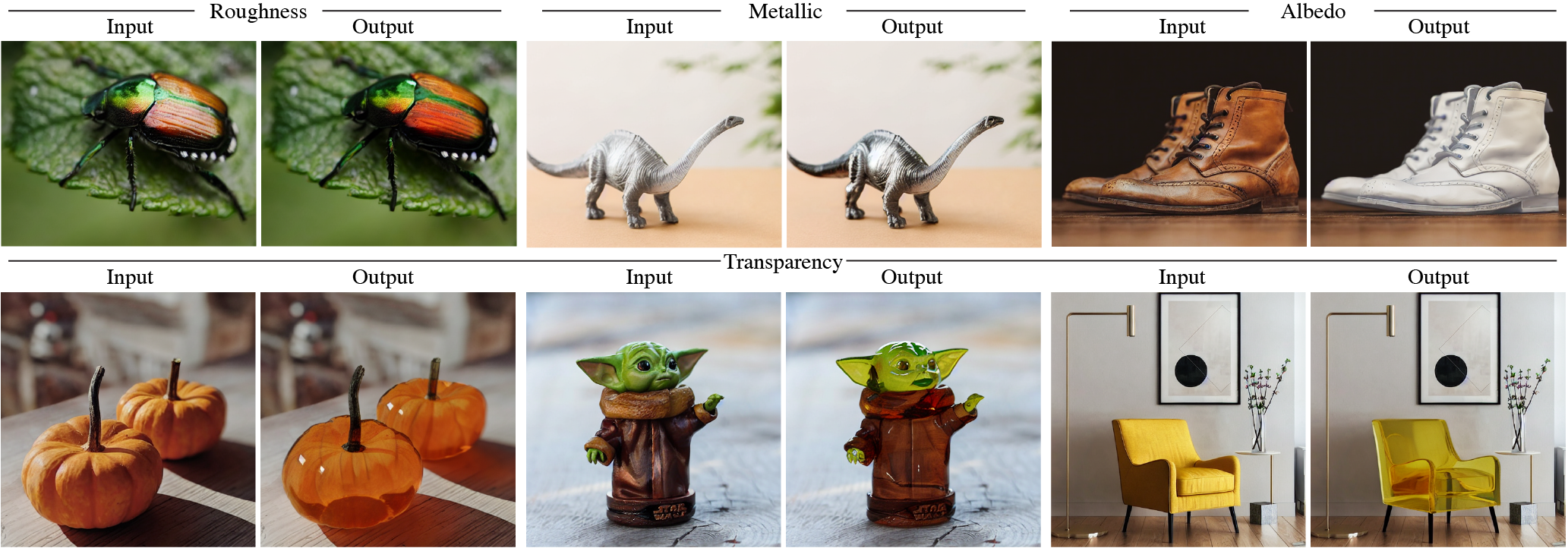

We present the results of our method for editing the roughness, metallic, albedo, and transparency of objects in unseen real images. As the relative attribute strength is varied linearly, the results show smooth edits that change only the desired material property while maintaining the high-level semantics and other information in the image.
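The linear sweep described above can be sketched as a loop over evenly spaced strengths. This is a minimal illustration, not the authors' code: the strength range [-1, 1], the frame count, and the `edit_fn(image, attribute, strength)` signature are all hypothetical stand-ins for the fine-tuned editing model.

```python
from typing import Any, Callable, List

# Hypothetical signature for the editing model: (image, attribute, strength) -> edited image.
EditFn = Callable[[Any, str, float], Any]


def linear_strengths(n_frames: int, lo: float = -1.0, hi: float = 1.0) -> List[float]:
    """Evenly spaced relative attribute strengths from lo to hi, inclusive.

    The [-1, 1] default range is an assumption for illustration only.
    """
    if n_frames < 2:
        raise ValueError("a sweep needs at least two frames")
    step = (hi - lo) / (n_frames - 1)
    return [lo + i * step for i in range(n_frames)]


def sweep_attribute(image: Any, attribute: str, edit_fn: EditFn, n_frames: int = 5) -> List[Any]:
    """Edit one image at each strength of a linear sweep, producing video frames."""
    return [edit_fn(image, attribute, s) for s in linear_strengths(n_frames)]


# Usage with a stand-in edit function that just records its arguments.
frames = sweep_attribute("cup.png", "roughness", lambda img, attr, s: (attr, s), n_frames=3)
print(frames)  # [('roughness', -1.0), ('roughness', 0.0), ('roughness', 1.0)]
```

Only the scalar changes from frame to frame; the image and the attribute instruction stay fixed, which is what lets a single property vary while everything else is held constant.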

Note: Please consider viewing in full screen to observe the subtle changes in the material properties, especially for roughness and metallic.

Video panels: Roughness, Transparency, Metallic, Albedo

Spatial Localization

We can limit the effect of a material edit to the segmentation mask of a particular object. For example, when an image contains two cats or two cups, we can use the segmentation map of one specific instance to mask the relative material change so that only that object is edited.
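One simple way to restrict an edit to a single instance is to composite the edited and original images through the instance mask. The page does not say whether masking happens in pixel space or inside the model, so treat this as a hypothetical post-hoc sketch; the grayscale list-of-lists image format is also an assumption chosen to keep the example dependency-free.

```python
from typing import List

# A grayscale image as an H x W grid of floats; real images would have color channels.
Image = List[List[float]]


def masked_composite(original: Image, edited: Image, mask: Image) -> Image:
    """Blend pixel-wise: mask 1 keeps the edited pixel, mask 0 keeps the original.

    Fractional mask values (e.g. from a soft segmentation) blend linearly.
    """
    if not (len(original) == len(edited) == len(mask)):
        raise ValueError("images and mask must have the same height")
    out: Image = []
    for row_o, row_e, row_m in zip(original, edited, mask):
        if not (len(row_o) == len(row_e) == len(row_m)):
            raise ValueError("images and mask must have the same width")
        out.append([m * e + (1.0 - m) * o for o, e, m in zip(row_o, row_e, row_m)])
    return out


# Two "objects" side by side; only the left one (mask = 1) receives the edit.
original = [[0.2, 0.2]]
edited = [[0.8, 0.8]]
instance_mask = [[1.0, 0.0]]
print(masked_composite(original, edited, instance_mask))  # [[0.8, 0.2]]
```

Pixel-space compositing can leave seams at mask boundaries (for example, where a reflection spills outside the object), which is one reason a method might prefer to apply the mask inside the model instead.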

Comparison Video Results

We compare our method with an InstructPix2Pix model trained on our data using text prompts only (Baseline), on unseen real images and for linearly varying values of the relative attribute strength.

Observe the smooth transitions produced by our method, as opposed to the flickering transitions of the baseline.
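To make "smooth versus flickering" concrete, one rough proxy is to measure how much each frame differs from the previous one and check whether those step sizes are even. This is an illustrative sketch only; the page reports no such metric, and the flattened-grayscale frame format and the `jitter` score are hypothetical choices.

```python
import statistics
from typing import List, Sequence

Frame = Sequence[float]  # a flattened grayscale frame


def step_sizes(frames: List[Frame]) -> List[float]:
    """Mean absolute per-pixel change between each pair of consecutive frames."""
    if len(frames) < 2:
        raise ValueError("need at least two frames")
    steps = []
    for prev, cur in zip(frames, frames[1:]):
        if len(prev) != len(cur):
            raise ValueError("frames must have the same size")
        steps.append(sum(abs(c - p) for p, c in zip(prev, cur)) / len(cur))
    return steps


def jitter(frames: List[Frame]) -> float:
    """Population std. dev. of step sizes: 0 for perfectly even steps, larger when
    transitions jump back and forth (flicker)."""
    return statistics.pstdev(step_sizes(frames))


smooth = [[0.0], [0.25], [0.5], [0.75], [1.0]]
flickery = [[0.0], [0.6], [0.2], [0.9], [1.0]]
print(jitter(smooth))  # 0.0
print(jitter(flickery) > jitter(smooth))  # True
```

A perceptually smooth material edit need not produce perfectly equal pixel steps, so this score is best read as a coarse sanity check rather than a faithful measure of visual quality.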

Note: Consider viewing in full screen to observe the subtle changes in the material properties, especially for roughness and metallic.

Video panels: Roughness, Transparency, Metallic, Albedo

Material Editing in NeRFs

We apply material editing to NeRFs on a selection of scenes from the DTU MVS dataset. We edit the training images to have reduced albedo or stronger specular reflections, then train a vanilla NeRF configuration on the edited images. The resulting NeRFs show highly plausible 3D structure with the intended albedo, roughness, and metallic changes.
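The first stage of this pipeline, editing every training view with the same settings before NeRF training, can be sketched as below. The `edit_fn` signature, the abstract pose type, and the convention that a negative strength lowers an attribute are assumptions for illustration; the NeRF itself is trained unchanged on the edited images and is not shown.

```python
from typing import Any, Callable, List, Tuple

# Hypothetical editing signature: (image, attribute, strength) -> edited image.
EditFn = Callable[[Any, str, float], Any]
# A training view: (image, camera pose). The pose representation is left abstract here.
View = Tuple[Any, Any]


def edit_training_views(views: List[View], edit_fn: EditFn,
                        attribute: str, strength: float) -> List[View]:
    """Apply one fixed material edit to every training image, leaving poses untouched.

    Using the same attribute and strength for every view keeps the requested
    change identical across the training set.
    """
    return [(edit_fn(image, attribute, strength), pose) for image, pose in views]


# Usage with a stand-in edit function; -0.5 assumes negative strength means "less albedo".
views = [("view_000.png", "pose_0"), ("view_001.png", "pose_1")]
edited = edit_training_views(views, lambda img, a, s: f"{img}|{a}={s}", "albedo", -0.5)
print(edited)  # [('view_000.png|albedo=-0.5', 'pose_0'), ('view_001.png|albedo=-0.5', 'pose_1')]
```

Camera poses are passed through untouched, since the edit is meant to change appearance only and not geometry.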

Note: These orbit-animation views lie outside the distribution of training views, so a large number of floaters is not unexpected. Background artifacts are also expected, because the near and far bounds are set for the foreground only.
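The role of the near and far bounds can be seen in how a vanilla NeRF places samples along each ray: depths are drawn by stratified sampling inside [near, far] only. The sketch below follows that standard scheme; the bound values and the single example ray are hypothetical, not the settings used for these scenes.

```python
import random
from typing import List, Optional, Sequence, Tuple


def stratified_depths(near: float, far: float, n_samples: int,
                      rng: Optional[random.Random] = None) -> List[float]:
    """Draw one depth uniformly from each of n_samples equal bins of [near, far]."""
    if not (0.0 <= near < far) or n_samples < 1:
        raise ValueError("require 0 <= near < far and n_samples >= 1")
    rng = rng or random.Random(0)
    width = (far - near) / n_samples
    return [near + (i + rng.random()) * width for i in range(n_samples)]


def ray_points(origin: Sequence[float], direction: Sequence[float],
               depths: Sequence[float]) -> List[Tuple[float, ...]]:
    """3D sample positions o + t * d along a single ray."""
    return [tuple(o + t * d for o, d in zip(origin, direction)) for t in depths]


# Hypothetical foreground-only bounds: nothing farther than 6.0 units is ever sampled.
depths = stratified_depths(near=2.0, far=6.0, n_samples=8)
points = ray_points((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), depths)
print(max(depths) <= 6.0)  # True
```

Because no sample ever lands beyond `far`, pixels showing distant background must be explained by density placed inside the foreground bounds, which is one plausible source of the background artifacts noted above.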